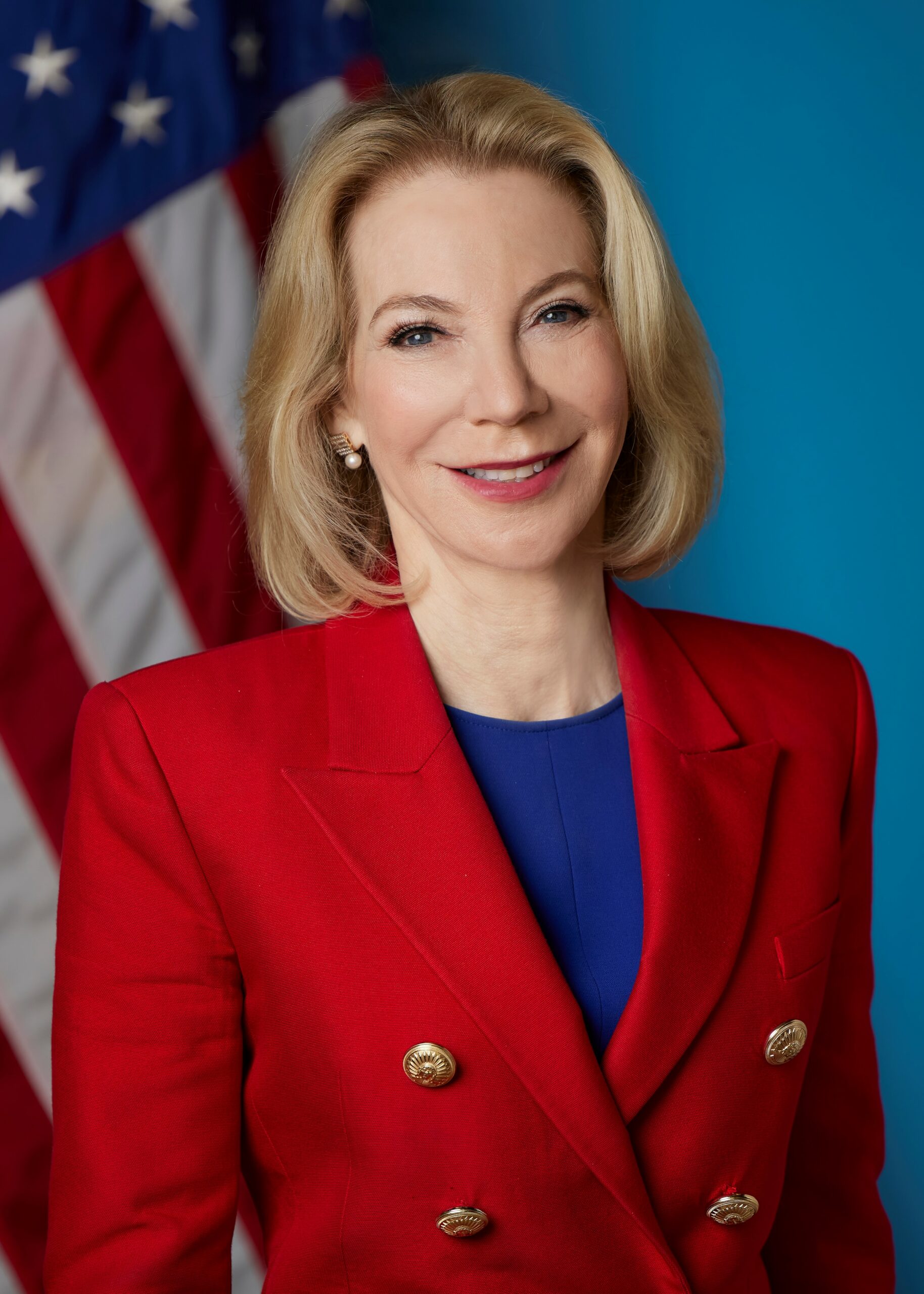

President Emerita Amy Gutmann Joins Penn MEDIATED as Faculty Advisor

Penn MEDIATED is honored to welcome one of the foremost scholars of democratic governance, Penn President Emerita Amy Gutmann, as a faculty advisor. The longest-serving president in the University of Pennsylvania's history and an appointee of both President Barack Obama and President Joe Biden, Professor Gutmann brings decades of civic leadership, as well as a rare combination of scholarly rigor and real-world impact, to Penn MEDIATED.

Executive Director Alex Engler reflects:

"From seminal scholarship on democratic deliberation; to advancing global dialogue as Penn's President; to strengthening alliances and combatting violent extremism as the U.S. Ambassador to Germany—the information ecosystem has been a guiding theme of her exemplary career. We are thrilled to welcome President Gutmann to the Center's leadership."

In joining Penn MEDIATED, Professor Gutmann says:

"The health of our democracy depends on the health of our information ecosystem. Seeking truth and understanding is not a utopian ideal; it is a prerequisite for a functioning republic. I am proud to join Penn MEDIATED and contribute to its vision of translating our rigorous research into democratic well-being."

As a scholar, Gutmann has made seminal contributions on democratic education, deliberative democracy, and the necessity of compromise for governance. As a member of the Knight Commission on Trust, Media, and Democracy, Gutmann contributed to the Commission's flagship report diagnosing the collapse of a shared information foundation as a core threat to American self-governance.

Penn MEDIATED Co-Director and Penn Integrates Knowledge Professor Duncan Watts observes:

"President Emerita Gutmann has a lifetime of experience transforming academic research into real world change—we are grateful for her help now, when it is more essential than ever to foster a healthier information ecosystem."

From 2022 to 2024, Gutmann served as the U.S. Ambassador to Germany, where she strengthened the U.S.-German relationship through expanded support for Ukrainian defense, increased trade, and bolstered resistance to extremism. She has since rejoined Penn, where she holds the Christopher H. Browne Distinguished Professorship in Political Science and is a Professor of Communication at the Annenberg School for Communication.

Christopher Yoo, Penn MEDIATED Co-Director and Imasogie Professor in Law and Technology, adds:

"Amy Gutmann has always been an authoritative voice and unflinching champion for a stronger and more deliberative democracy. We look forward to Amy opening our inaugural Penn MEDIATED conference at the end of August."

Professor of Communication, Annenberg School for Communication

President Emerita, University of Pennsylvania

Amy Gutmann is the Christopher H. Browne Distinguished Professor of Political Science at the School of Arts and Sciences and Professor of Communication at the Annenberg School for Communication. She is also President Emerita, having had a transformative impact as the longest-serving president of the University of Pennsylvania. From 2022 to 2024, Gutmann served as the U.S. Ambassador to Germany, where she strengthened the U.S.-German relationship through expanded support for Ukrainian defense, increased trade, and bolstered resistance to extremism.

As a scholar, Gutmann has made seminal contributions on democratic education, deliberative democracy, and the necessity of compromise for governance. Gutmann served as a member of the Knight Commission on Trust, Media, and Democracy (2017–2019), informing a groundbreaking report that examined the crisis of democratic backsliding in the United States and offered recommendations for restoring public trust. In 2009, President Obama appointed Gutmann chair of the Presidential Commission for the Study of Bioethical Issues, where she helped guide national conversations on pressing public health challenges in the United States. Gutmann also served as an Executive Committee member of the National Constitution Center, and in 2018 she was named to Fortune magazine's "World's 50 Greatest Leaders" list.